UG Kids Lab

Building AI for children requires building it with children.

Purpose & Vision

Conversational AI for children is a fundamentally new style of play. There's no existing playbook, no decades of research on how kids interact with AI characters, how they test boundaries, how they build (or lose) trust with a synthetic voice.

The Kids Lab exists because assumptions fail. What adults think children will say, how they think children will react, what they think will hold attention: it's almost always wrong. Real children mumble, interrupt, invent words, get distracted, push limits, and surprise you. You can't design for that in a vacuum.

We answer UX questions. We collect data. And we make sure that data is annotated so it can train and improve our systems.

UX Research Areas

The domains we explore to understand how children actually interact with AI.

Voice UX

How children speak to AI: tone, pacing, vocabulary, and the unique acoustic patterns of young voices in real environments.

Conversational UX

Turn-taking, interruption handling, topic shifts, and how children expect dialogue to flow versus how adults design it.

Responsible AI Design

Age-appropriate responses, safety boundaries, and building trust without manipulation or dark patterns.

Open vs. Limited Conversations

When to let children explore freely versus when to guide them within structured boundaries, and how to transition between modes.

What We Collect

Data that trains and improves our systems across three critical domains.

ASR (Automatic Speech Recognition)

Child voice data across modalities, hardware types, ages, accents, and language abilities. Off-the-shelf ASR fails with children, so we build the training data to fix that.

Safety for Parents & Kids

Real examples of boundary testing, sensitive disclosures, and edge cases. What children actually say when they push limits, and how parents respond.

Conversational Design

Dialogue patterns, engagement signals, confusion markers, and the moments where interactions succeed or fail.

Data Processing & Transcription

How we collect, process, and use child speech data, ethically and transparently.

Consent-First Collection

Every session begins with explicit parental consent. Parents understand what's being recorded, why, and how it will be used.

Secure Capture

Audio is captured through our SDK and transmitted via encrypted channels. Raw audio never touches client servers.

Transcription & Annotation

Speech is transcribed by child-trained ASR. Human annotators review and correct to continuously improve accuracy.
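One common way to measure how far an ASR transcript is from the annotator's corrected version is word error rate (WER). The sketch below is illustrative only: the page does not say which accuracy metric UG uses, and the function name and the lowercase, whitespace-split normalization are assumptions.

```python
def word_error_rate(reference: str, hypothesis: str) -> float:
    """Word-level edit distance divided by the number of reference words.

    `reference` is the human-corrected transcript and `hypothesis` is the
    raw ASR output. Both names are hypothetical, chosen for this sketch.
    """
    ref = reference.lower().split()
    hyp = hypothesis.lower().split()
    if not ref:
        raise ValueError("reference transcript is empty")

    # prev[j] holds the edit distance between the reference words seen so far
    # and the first j hypothesis words.
    prev = list(range(len(hyp) + 1))
    for i, ref_word in enumerate(ref, start=1):
        curr = [i]
        for j, hyp_word in enumerate(hyp, start=1):
            cost = 0 if ref_word == hyp_word else 1
            curr.append(min(
                prev[j] + 1,         # reference word missing from the ASR output
                curr[j - 1] + 1,     # extra word in the ASR output
                prev[j - 1] + cost,  # substitution, or a match when cost is 0
            ))
        prev = curr
    return prev[-1] / len(ref)
```

Tracking a score like this per age group or per device would show where annotator corrections are concentrated, which is one plausible way to decide what training data to collect next.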

PII Reduction

Personal information is automatically detected and removed. Names, locations, and identifying details are stripped.
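As a rough illustration of automatic detection and removal, the sketch below redacts a few kinds of identifying detail from a transcript using regular expressions. It is not UG's pipeline: the patterns, labels, and `redact` function are hypothetical, and catching names and locations reliably would likely need named-entity recognition rather than the simple cue phrases assumed here.

```python
import re

# Hypothetical patterns, for illustration only.
PATTERNS = {
    "EMAIL": re.compile(r"\b[\w.+-]+@[\w-]+\.[\w.-]+\b"),
    "PHONE": re.compile(r"\b\d{3}[-.\s]\d{3}[-.\s]\d{4}\b"),
}

# Assumed cue phrases after which a child is likely to say their name.
NAME_CUE = re.compile(r"\b(my name is|i am called)\s+(\w+)", re.IGNORECASE)


def redact(transcript: str) -> str:
    """Replace detected identifying details with bracketed placeholders."""
    for label, pattern in PATTERNS.items():
        transcript = pattern.sub(f"[{label}]", transcript)
    # Keep the cue phrase, replace only the word that follows it.
    transcript = NAME_CUE.sub(lambda m: f"{m.group(1)} [NAME]", transcript)
    return transcript
```

Replacing details with typed placeholders rather than deleting them keeps the sentence structure intact, which matters if the redacted transcript is later used for conversational analysis.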

Anonymization

Data is anonymized and aggregated. No identity-level profiles. Individual sessions can't be traced to specific children.

Model Training

Processed data improves our ASR and safety models. Better accuracy for all children, not surveillance of any individual.

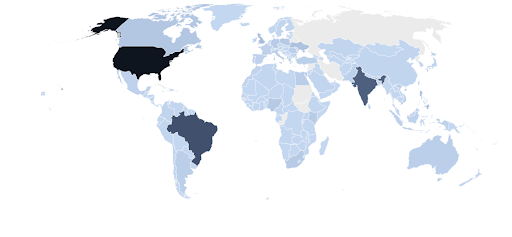

Tested Around the World

Kids Lab sessions span 30+ US states and multiple countries, capturing the full diversity of how children interact with conversational AI, including different accents, languages, and cultural contexts.

How We Test & Gather Data

Five distinct methodologies to capture the full range of child-AI interactions, from controlled experiments to messy real-world chaos.

WOO (Wizard of Oz)

Human-in-the-loop simulations where researchers control responses in real time. A researcher listens and responds as the AI, learning exactly how children phrase requests, handle confusion, and push boundaries.

MOO (Model of Oz)

AI-driven interactions with human oversight and intervention capability. The AI handles the conversation while researchers monitor and can step in when needed, testing real model behavior with a safety net.

Home Play

Natural environment testing in family settings where children interact organically. No lab, no observers hovering. Just real kids in their own space, with all the distractions, interruptions, and chaos that entails.

Remote App Testing

Distributed testing at scale across diverse geographic and demographic groups. Families sign up, receive the app, and test from anywhere, giving us data from 30+ states and multiple countries.

Daycares & Schools

Structured group settings with diverse participants and controlled observation. Testing in real classrooms with real noise, real peer dynamics, and real teachers: the environments where many AI products will actually be used.

Applied Research

Real examples of how Kids Lab research directly improves our products.

Classroom-Scale Voice Interaction in Noisy Environments

Context: An ESL education provider approached UG Labs with a core classroom challenge: enabling 20–30 children to interact with AI simultaneously in a noisy school environment.

What We Tested: Kids Lab conducted controlled and in-class testing of multiple microphone types and Voice Activity Detection (VAD) configurations, including push-to-talk, default VAD, and custom timeout-based VAD designed to close microphones even under constant background noise. Testing was conducted across three classrooms with up to 30 children using tablets concurrently.

Outcome: We demonstrated that, with the right hardware and VAD configuration, large-scale simultaneous voice interaction is feasible in real classrooms without degrading the experience.

Why It Matters: This work enabled clear deployment guidelines for embedding UG-powered interactive experiences into schools and group learning environments.
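The timeout-based VAD idea from this case study can be sketched as a small state machine: the microphone opens on a loud frame, closes after a run of quiet frames, and also closes after a hard limit so that constant background noise cannot hold it open. This is a minimal sketch under assumptions, not the configuration that was tested: the class name, thresholds, frame counts, and the rule that the room must go quiet before the microphone can reopen are all hypothetical.

```python
class TimeoutVad:
    """Energy-gated microphone with a silence timeout and a hard timeout."""

    def __init__(self, energy_threshold=0.1, silence_frames=3, max_open_frames=10):
        self.energy_threshold = energy_threshold  # assumed normalized energy level
        self.silence_frames = silence_frames      # quiet frames needed to close
        self.max_open_frames = max_open_frames    # hard limit on one open period
        self.is_open = False
        self._quiet = 0
        self._open_for = 0
        self._locked = False  # set after a hard timeout until a quiet frame arrives

    def process(self, frame_energy: float) -> bool:
        """Feed one frame's energy; return whether the mic is open afterwards."""
        loud = frame_energy >= self.energy_threshold
        if not loud:
            self._locked = False  # a quiet frame allows the mic to reopen later

        if not self.is_open:
            if loud and not self._locked:
                self.is_open = True
                self._quiet = 0
                self._open_for = 1
            return self.is_open

        self._open_for += 1
        self._quiet = 0 if loud else self._quiet + 1
        if self._quiet >= self.silence_frames:
            self.is_open = False       # normal end of utterance
        elif self._open_for >= self.max_open_frames:
            self.is_open = False       # hard timeout under sustained noise
            self._locked = loud
        return self.is_open
```

Under constant noise, a default energy-only VAD would never see enough silence to close. With the hard limit in this sketch, the microphone closes after `max_open_frames` and stays closed until the noise drops.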

Large-Scale Safety Red Teaming and Taxonomy Development

Context: After establishing baseline safety guardrails, UG Labs sought to understand how real users attempt to bypass AI safety systems, how they define inappropriate behavior, and how resilient the system is under sustained pressure.

What We Tested: Kids Lab ran a large-scale red teaming exercise with 1,000 participants: 500 teenagers and 500 adults. Participants were asked to try to provoke unsafe or inappropriate responses, with intentionally vague instructions to capture natural behavior.

Outcome: No severe safety incidents occurred, unlike what is commonly observed when similar tests are run against off-the-shelf LLMs. The data enabled detailed analysis of which responses were effective, which were suboptimal, and where user expectations diverged from system behavior.

Why It Matters: This work both validated the robustness of UG's safety guardrails under real-world pressure and formed a core foundation of our evolving safety taxonomy, informing detection, classification, and response design across all UG-powered products.

Pre-K Language Development and Child ASR Research

Context: Early testing revealed that speech recognition accuracy drops sharply for children under six, often breaking conversational flow and causing frustration that prevents sustained interaction.

What We Tested: In partnership with Boise State University's Kidsteam HCI Lab, Kids Lab ran a large-scale data collection program across eight daycare centers and fifteen families. Custom interactive hardware was deployed, enabling structured and open-ended conversational play with children aged 3–5.

Outcome: Over 1,800 sessions of real child–AI interaction were collected. All audio data was annotated and tagged by linguists alongside child development specialists, pedagogues, and HCI researchers. This resulted in both actionable UX guidelines for pre-K conversational design and substantial improvements to UG's child-specific ASR models.

Why It Matters: UG's speech recognition performance for pre-K users now surpasses off-the-shelf models, making conversational AI viable for younger children and enabling entirely new categories of age-appropriate AI experiences.

Come build with us

Our infrastructure is built on real research with real children. Get in touch.
